a Department of Architecture, Graduate School of Engineering, The University of Tokyo, Tokyo, Japan

b Lyles School of Civil Engineering, Purdue University, West Lafayette, IN, USA

Abstract

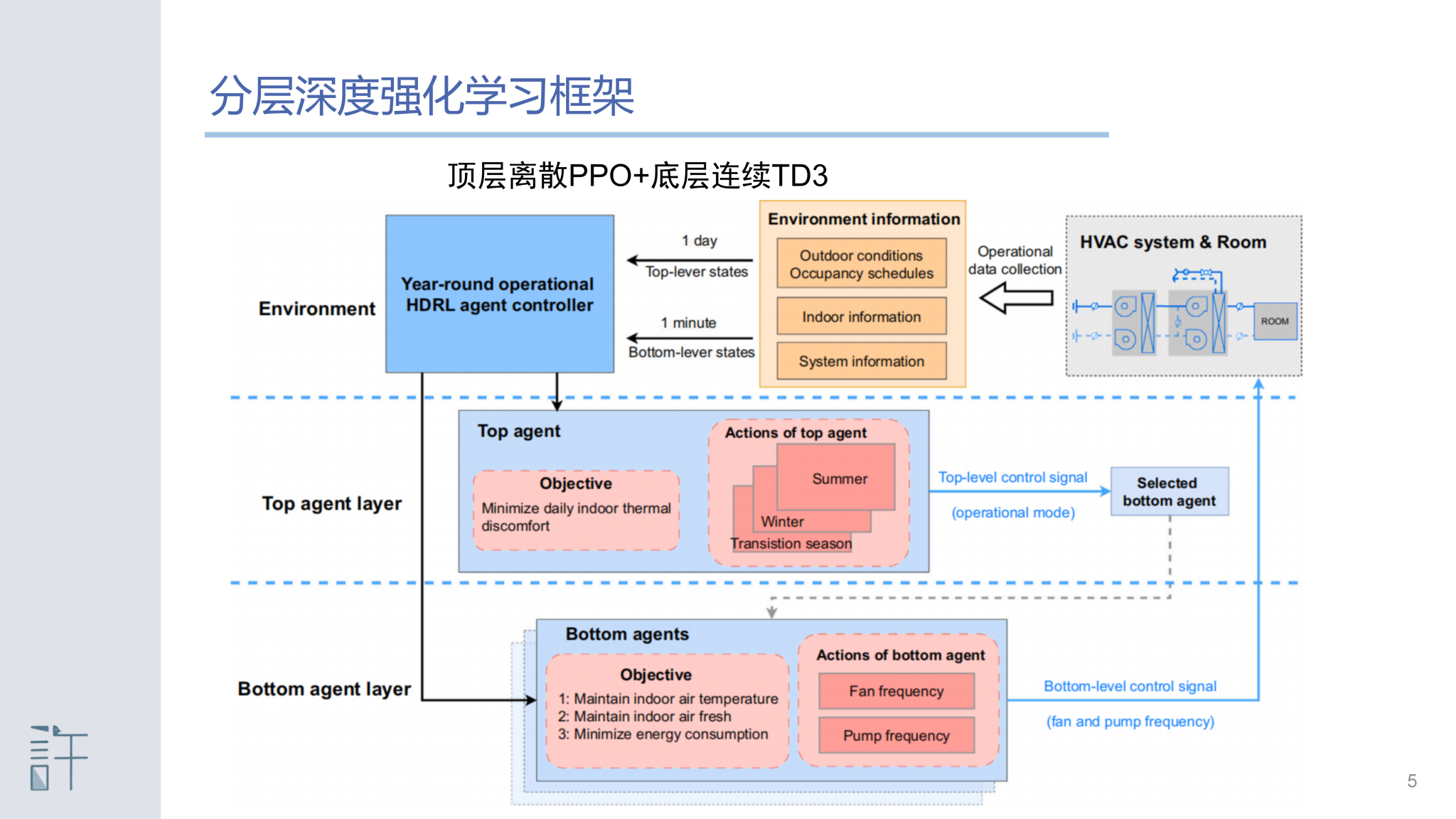

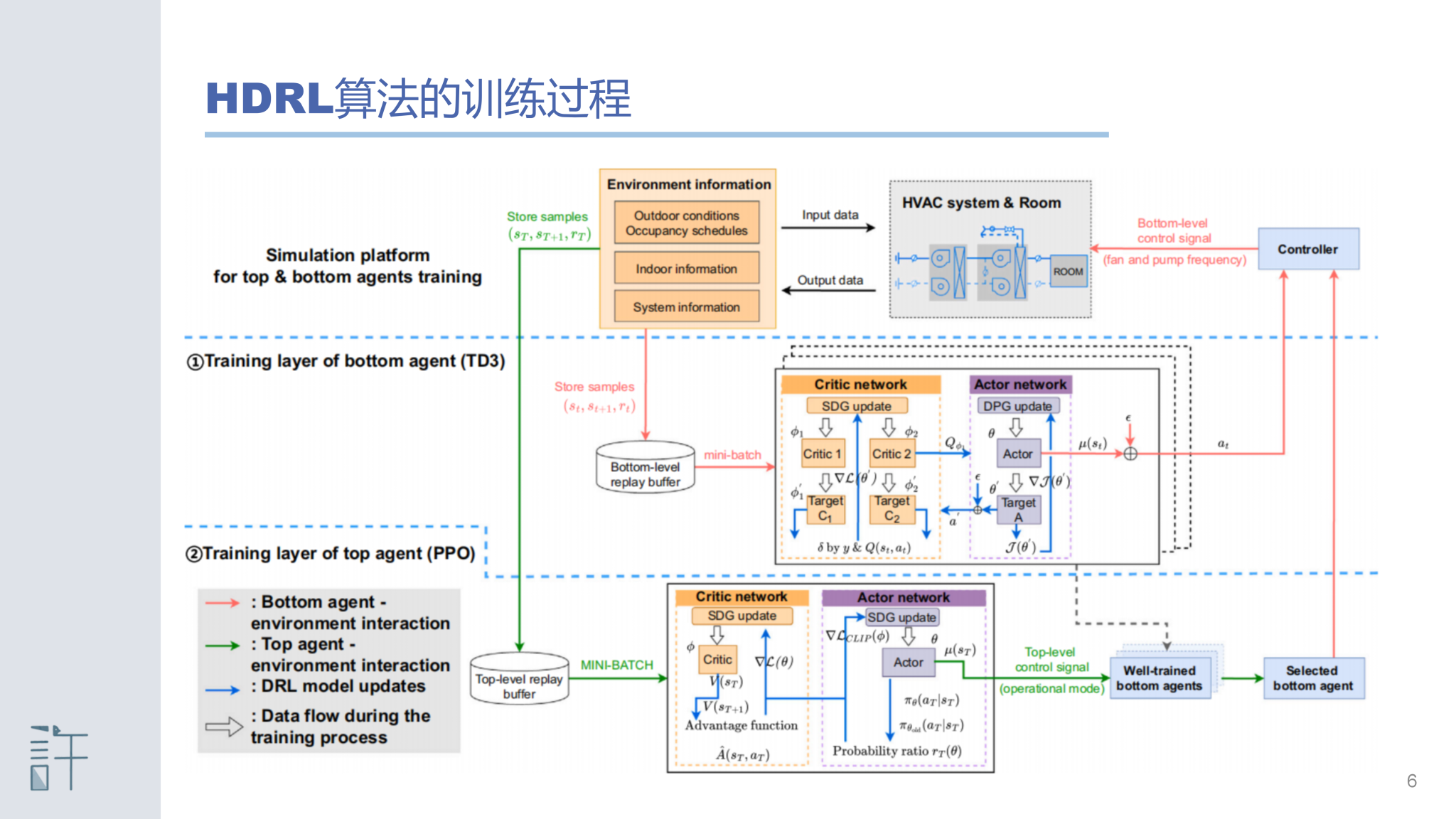

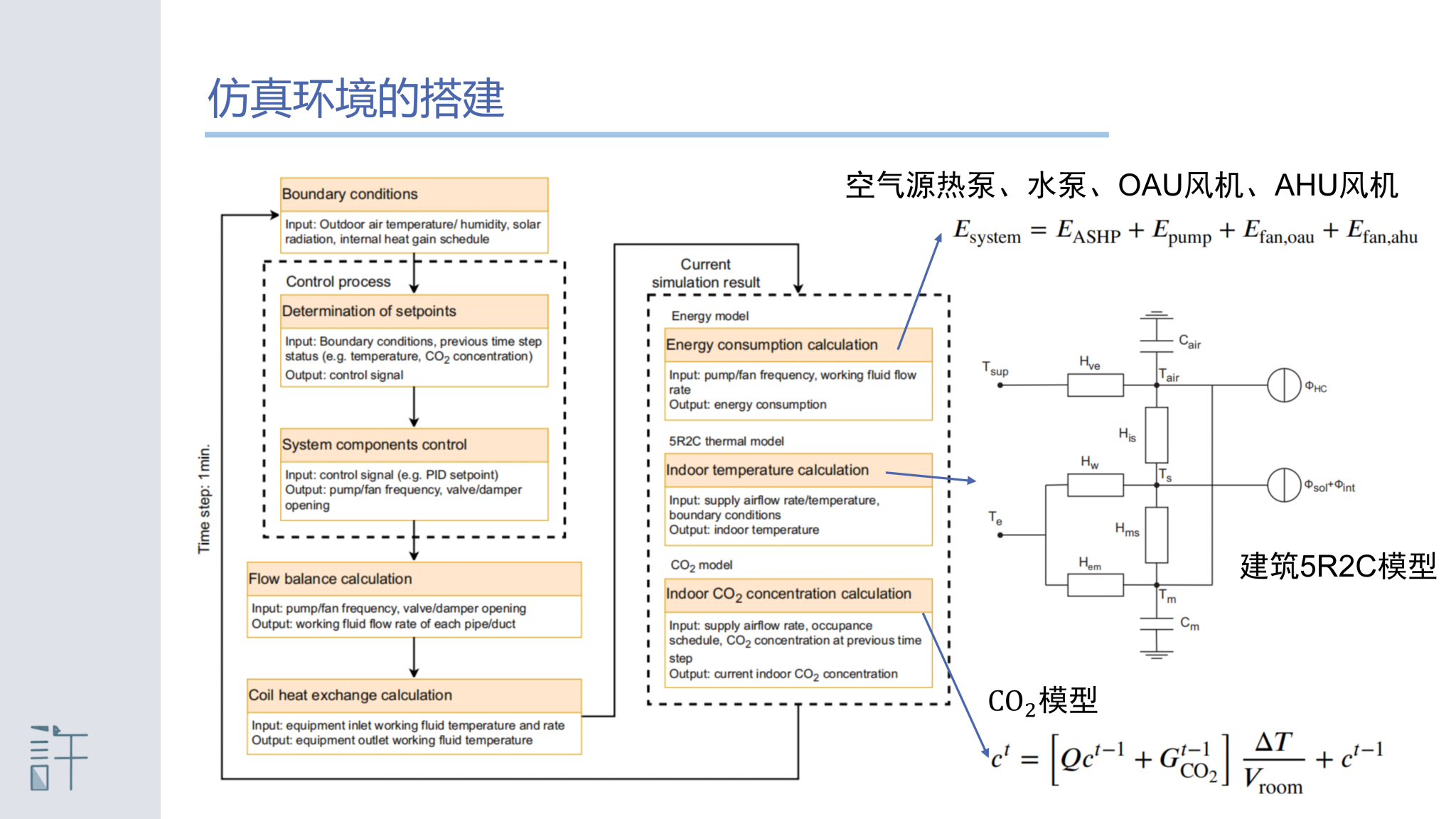

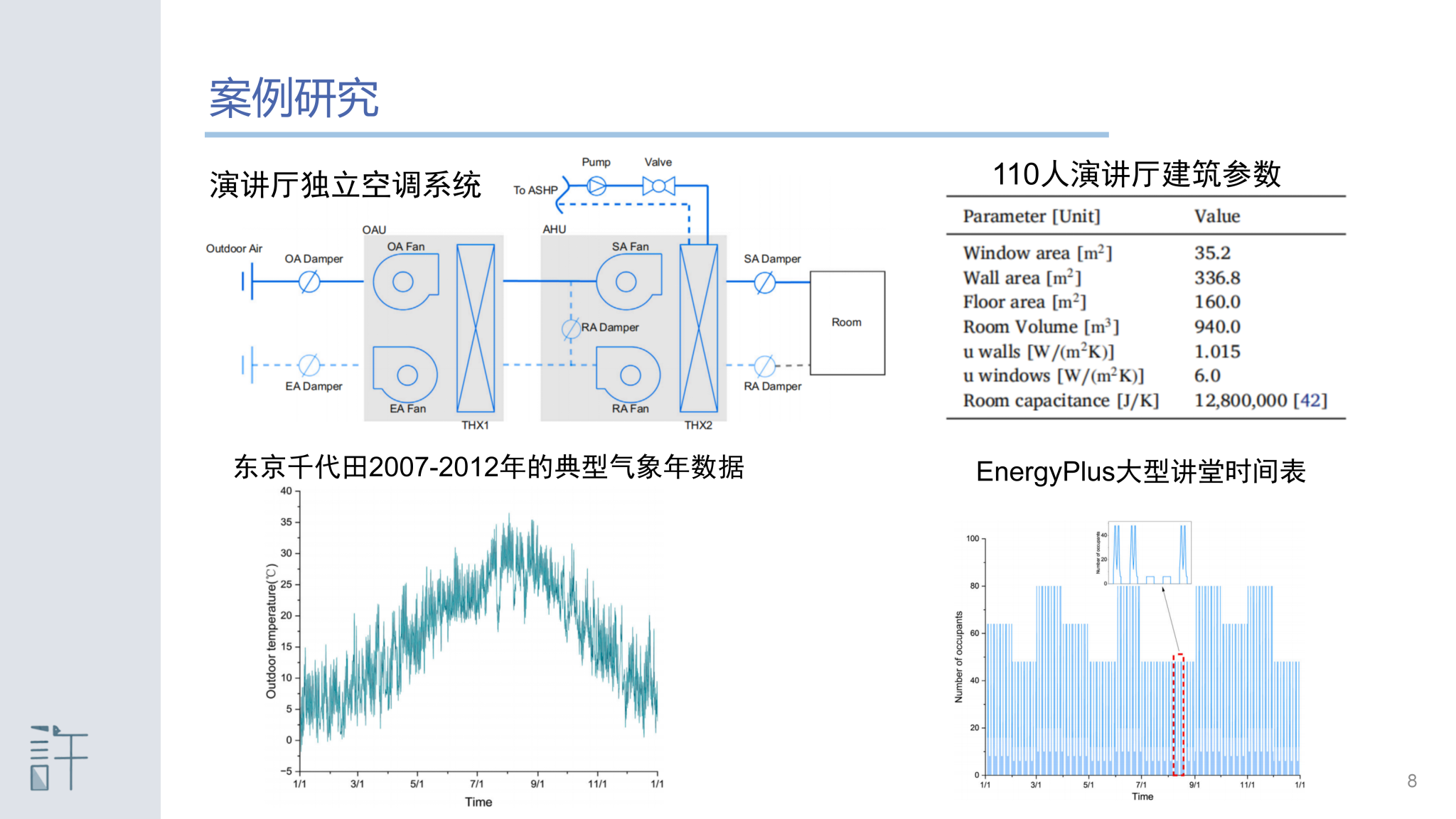

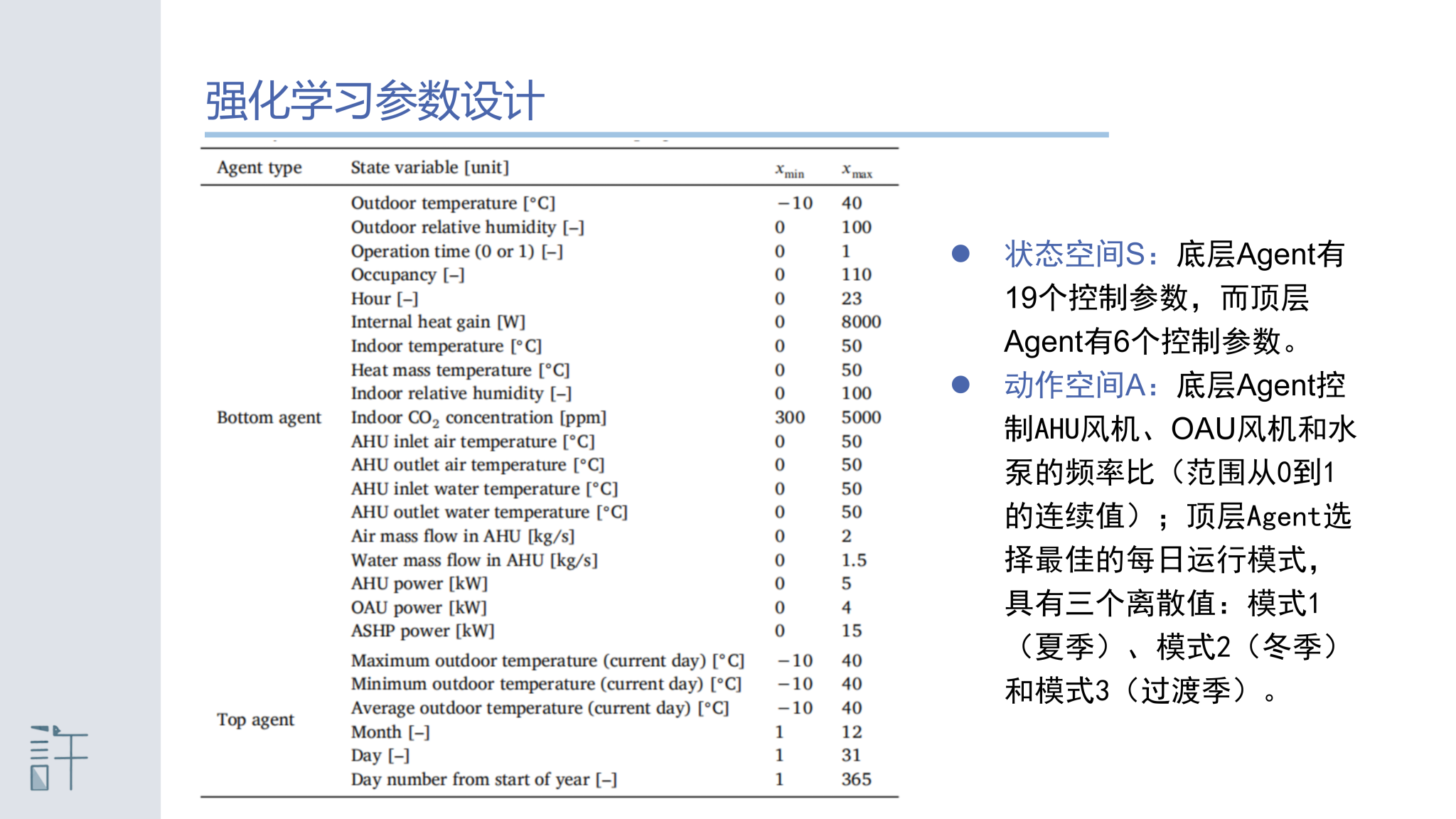

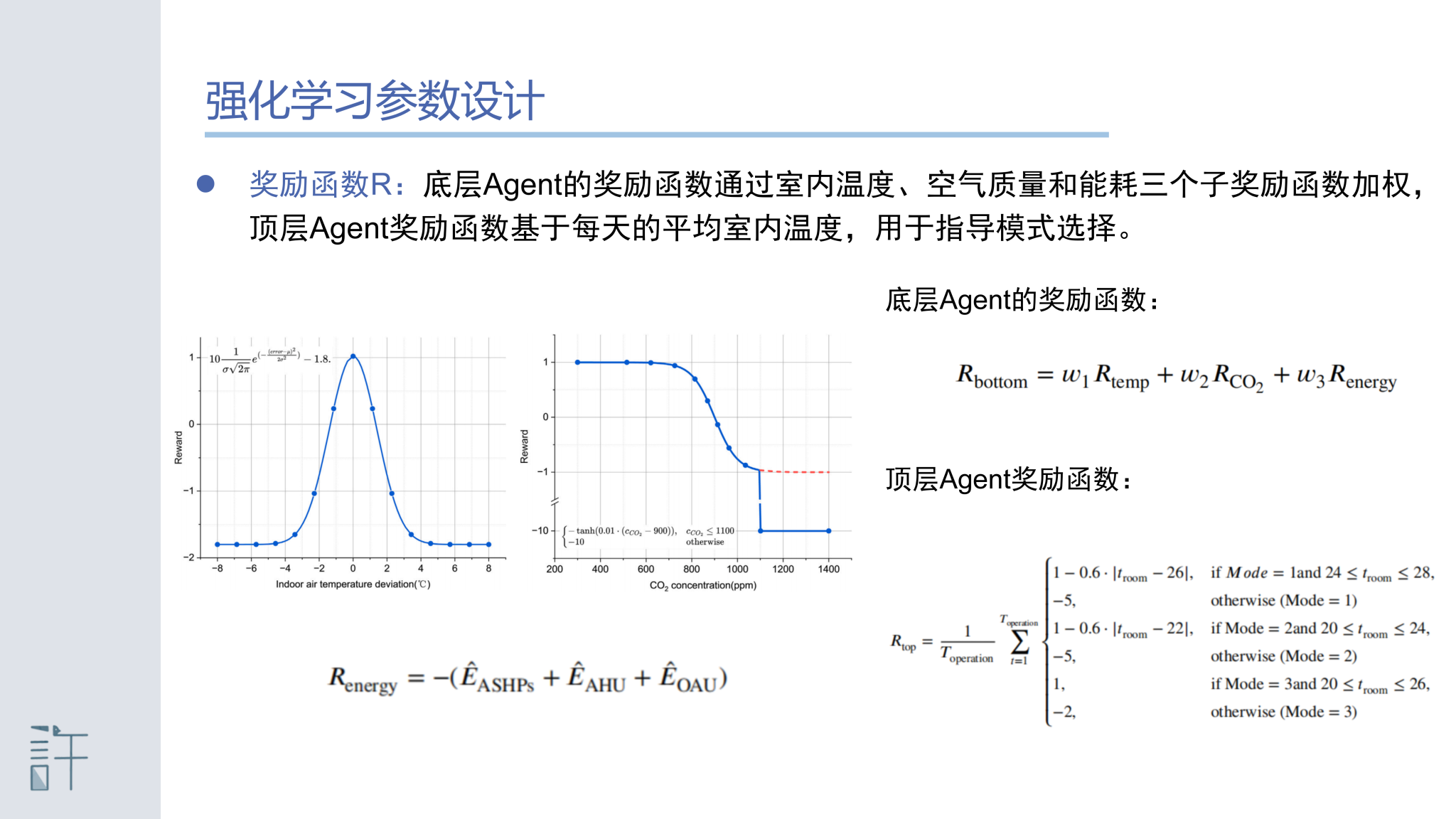

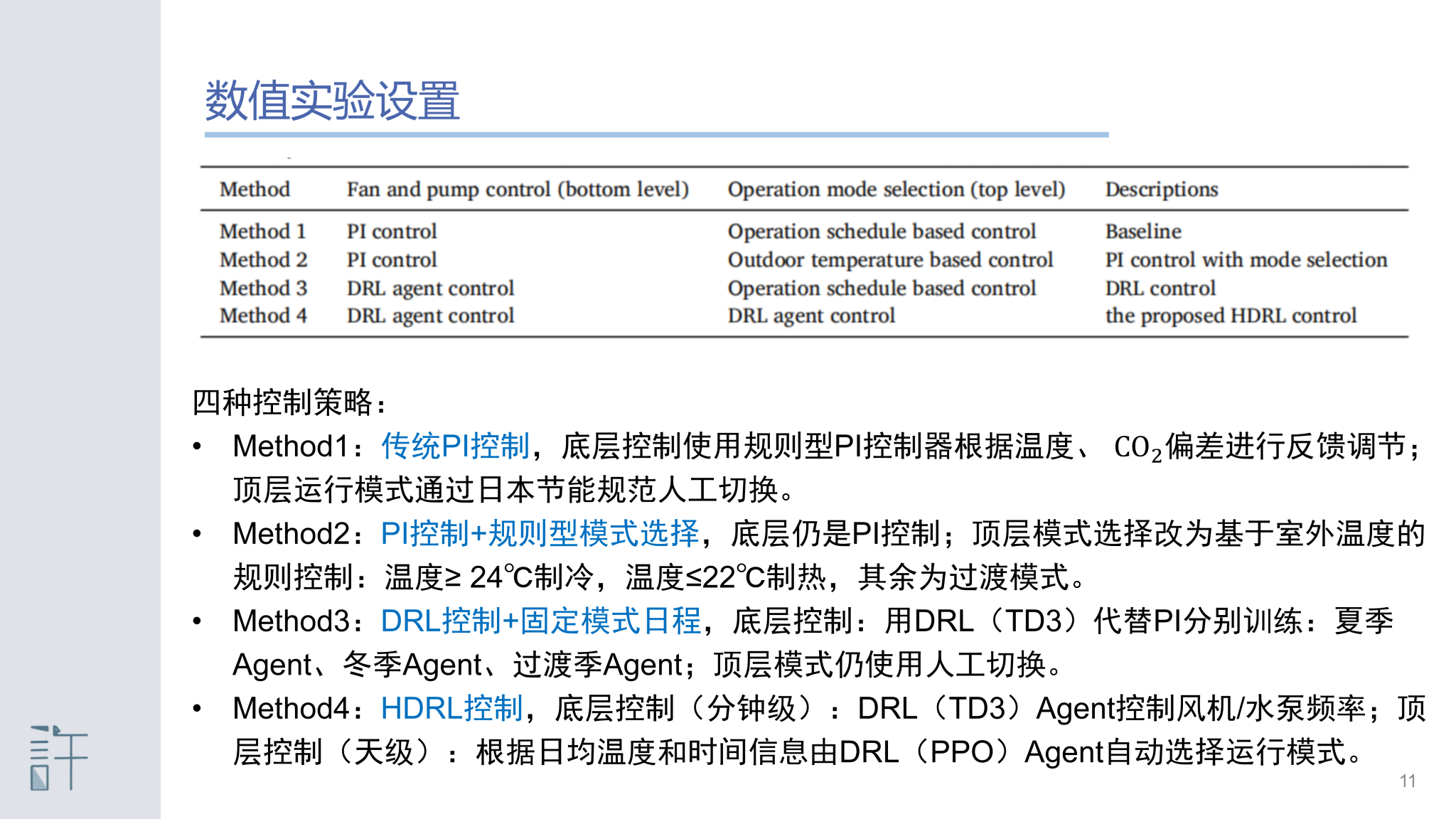

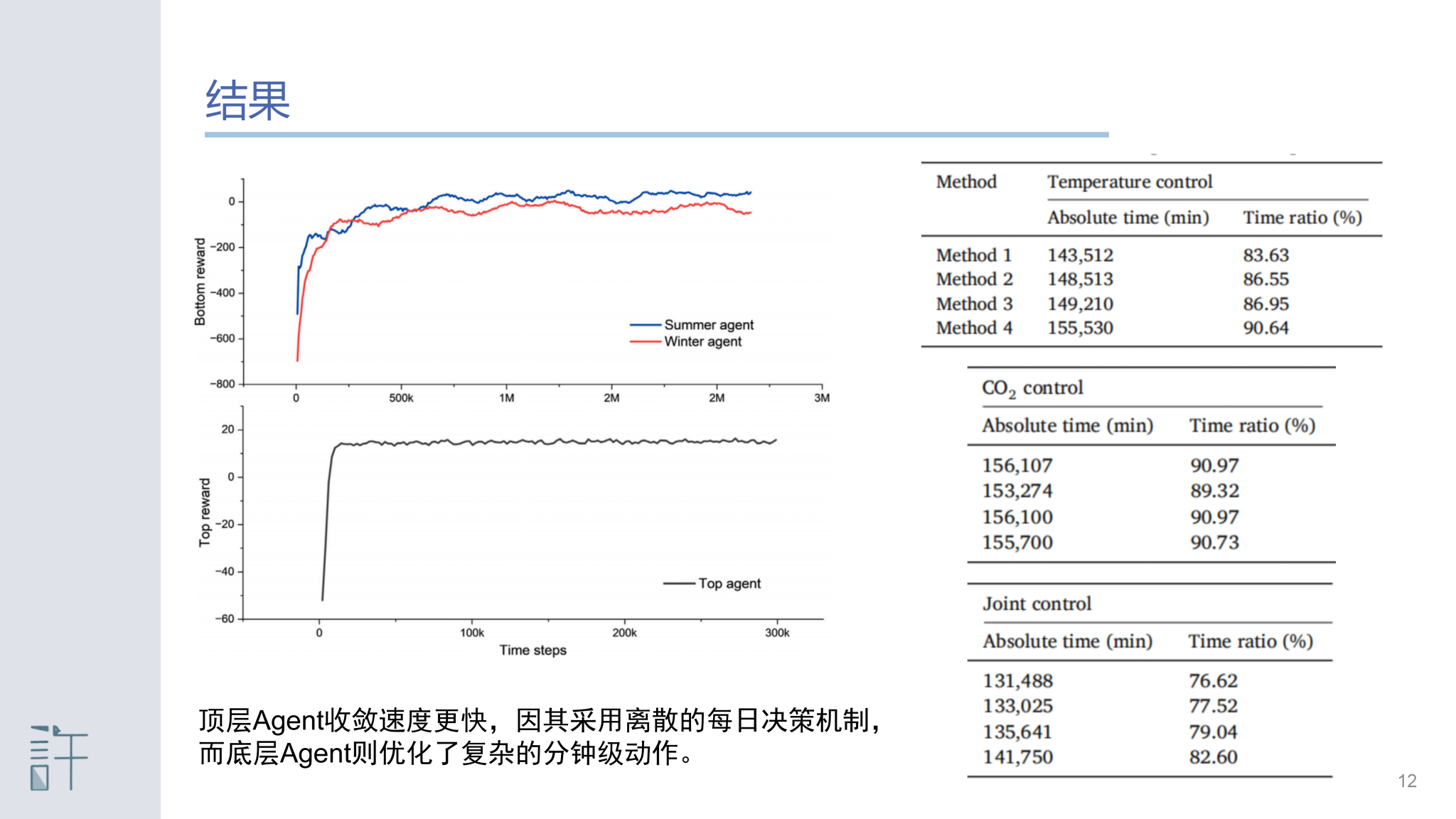

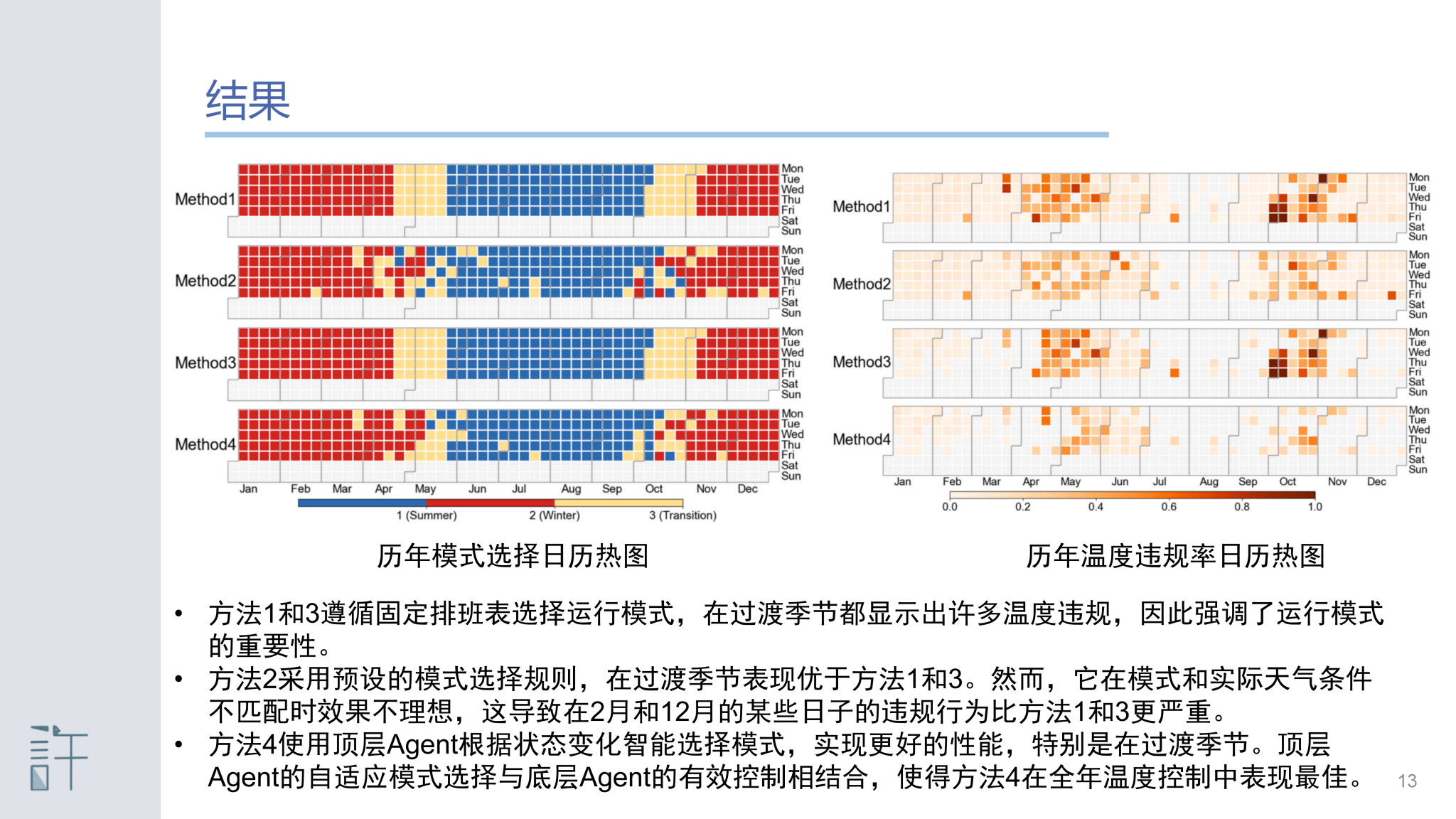

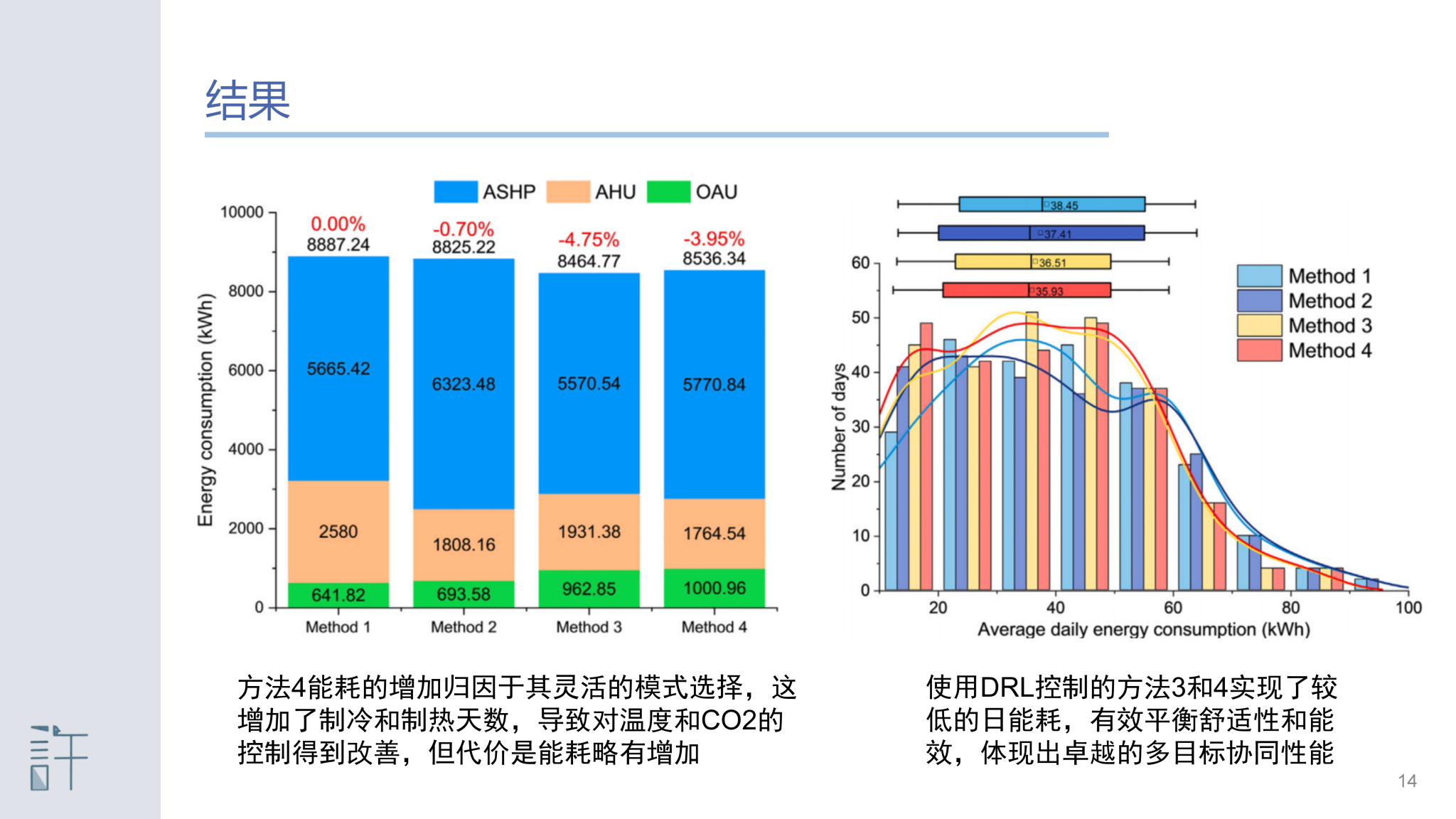

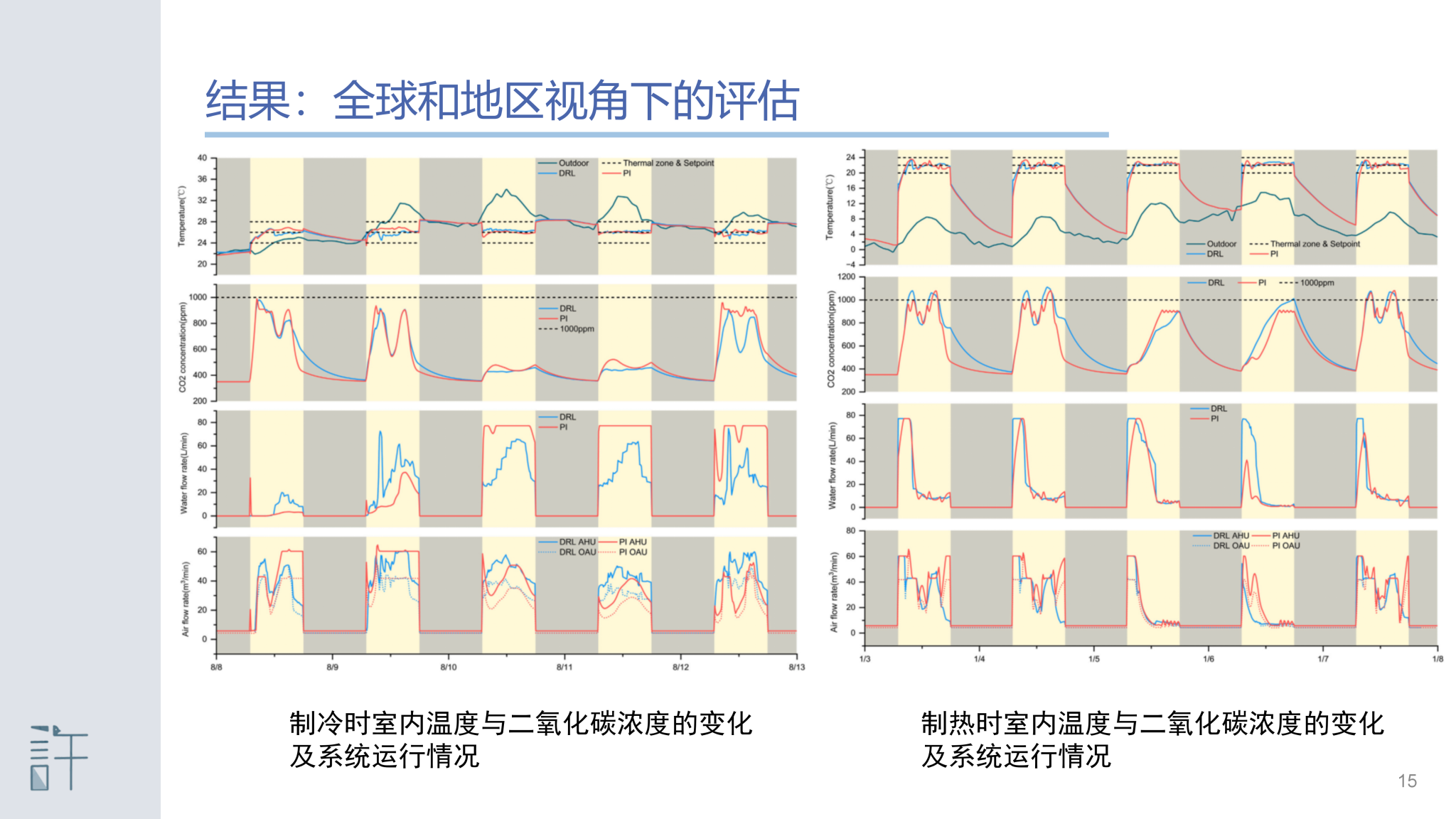

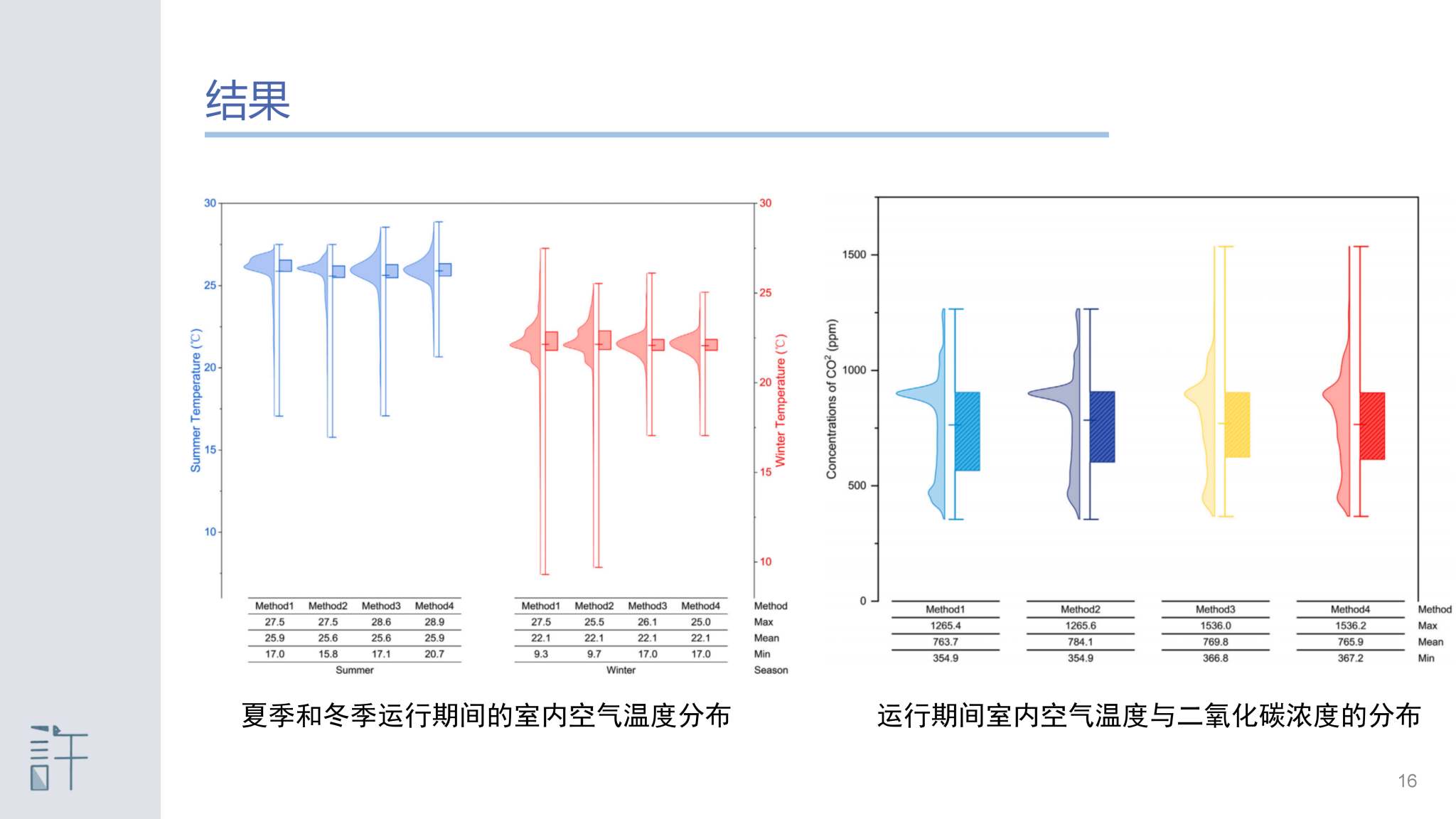

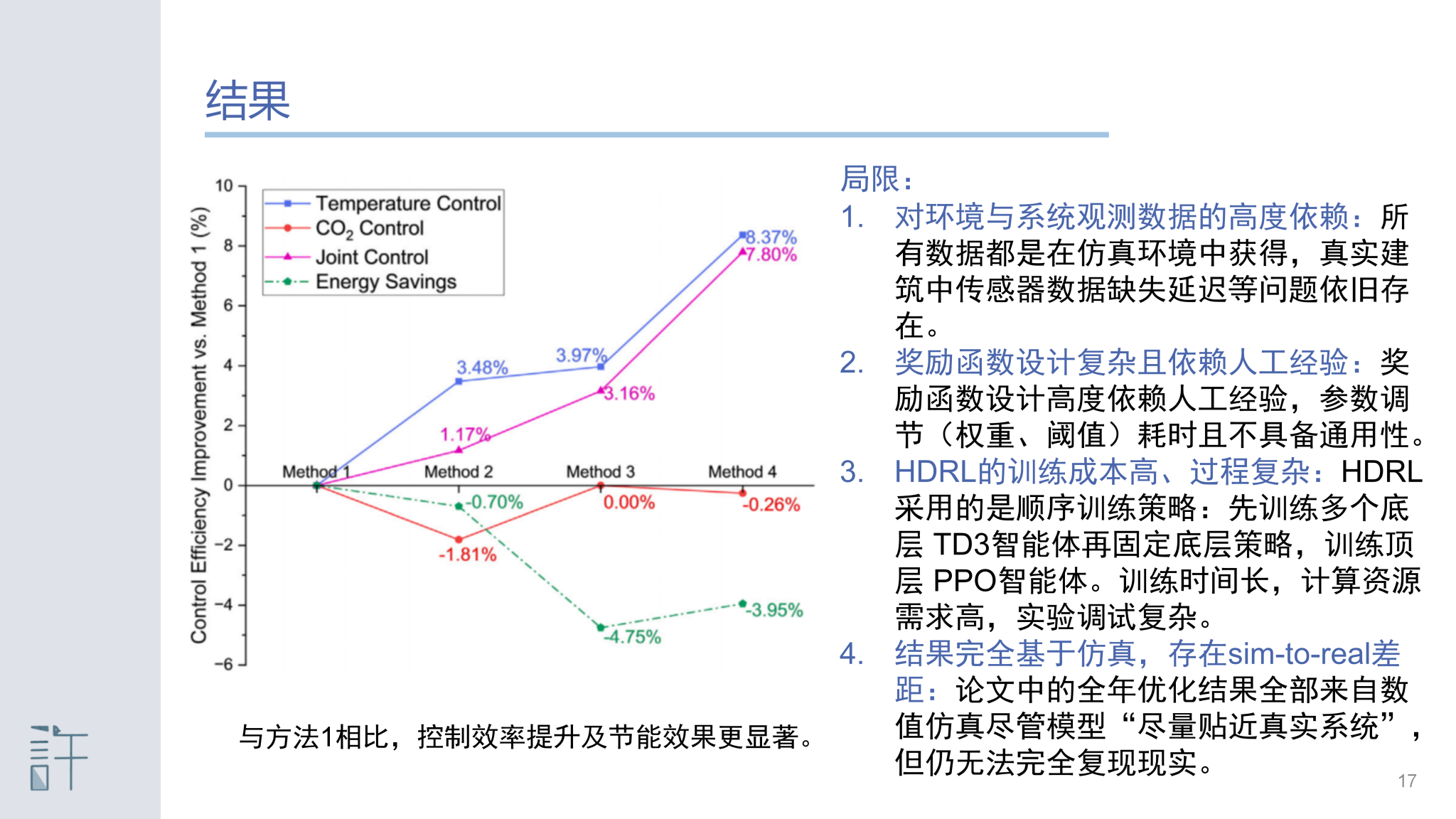

Balancing user comfort against energy consumption in heating, ventilation, and air conditioning (HVAC) systems is a critical challenge in control optimization. Deep reinforcement learning (DRL) algorithms have achieved some success in optimizing HVAC system control, but training agents with existing algorithms becomes difficult when the control problem involves hybrid actions. This study proposes an HVAC optimization control method based on hierarchical deep reinforcement learning (HDRL) that aims to minimize system energy consumption while ensuring indoor comfort and maintaining air quality. The proposed HDRL method employs a two-layer agent architecture: the bottom agent optimizes the frequency control of fans and pumps, and the top agent selects the daily operating mode based on outdoor meteorological conditions. To train the agents reliably, we designed a multi-objective reward function for the bottom agent that combines a Gaussian distribution, a hyperbolic tangent function, and normalized energy consumption, together with a temperature-based reward function for the top agent. Experimental results indicate that the HDRL method significantly improves indoor temperature and CO2 concentration control over year-round operation of the HVAC system. Compared with conventional PI control, the temperature compliance rate increases by 8.37 %, the combined temperature and CO2 compliance rate improves by 7.8 %, and energy consumption is reduced by 3.95 %.
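The abstract names the ingredients of the bottom-agent reward (a Gaussian distribution, a hyperbolic tangent function, and normalized energy consumption) but does not give the functional forms or weights. The following is a minimal sketch of how such a reward could be assembled. It assumes, without confirmation from the paper, that the Gaussian term scores temperature and the tanh term scores CO2. The function name `bottom_reward` and every parameter value (setpoint, CO2 limit, scales, weights) are hypothetical.

```python
import math


def bottom_reward(temp_c, co2_ppm, energy_kwh,
                  temp_target=24.0, temp_sigma=1.0,
                  co2_limit=1000.0, co2_scale=200.0,
                  energy_max=50.0,
                  w_temp=1.0, w_co2=1.0, w_energy=1.0):
    """Illustrative multi-objective reward; all defaults are assumed values."""
    # Gaussian comfort term: equals 1 at the setpoint and decays
    # smoothly as the indoor temperature moves away from it.
    r_temp = math.exp(-((temp_c - temp_target) ** 2) / (2.0 * temp_sigma ** 2))

    # Hyperbolic tangent air-quality term: approaches +1 well below the
    # CO2 limit, is 0 at the limit, and approaches -1 well above it.
    r_co2 = math.tanh((co2_limit - co2_ppm) / co2_scale)

    # Energy term: consumption normalized to [0, 1] and applied as a penalty.
    e_norm = min(max(energy_kwh / energy_max, 0.0), 1.0)

    return w_temp * r_temp + w_co2 * r_co2 - w_energy * e_norm


if __name__ == "__main__":
    # Same air quality and energy use, different indoor temperatures.
    print(bottom_reward(24.0, 800.0, 10.0))
    print(bottom_reward(27.0, 800.0, 10.0))
```

Bounded, smooth terms like these keep each objective on a comparable scale, which is one plausible reason to prefer them over raw deviations when several objectives are summed into a single reward.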

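Notice: The content of this website is copyrighted by Professor Xu Peng's research group. It may not be reproduced, excerpted, or otherwise used without the group's authorization.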